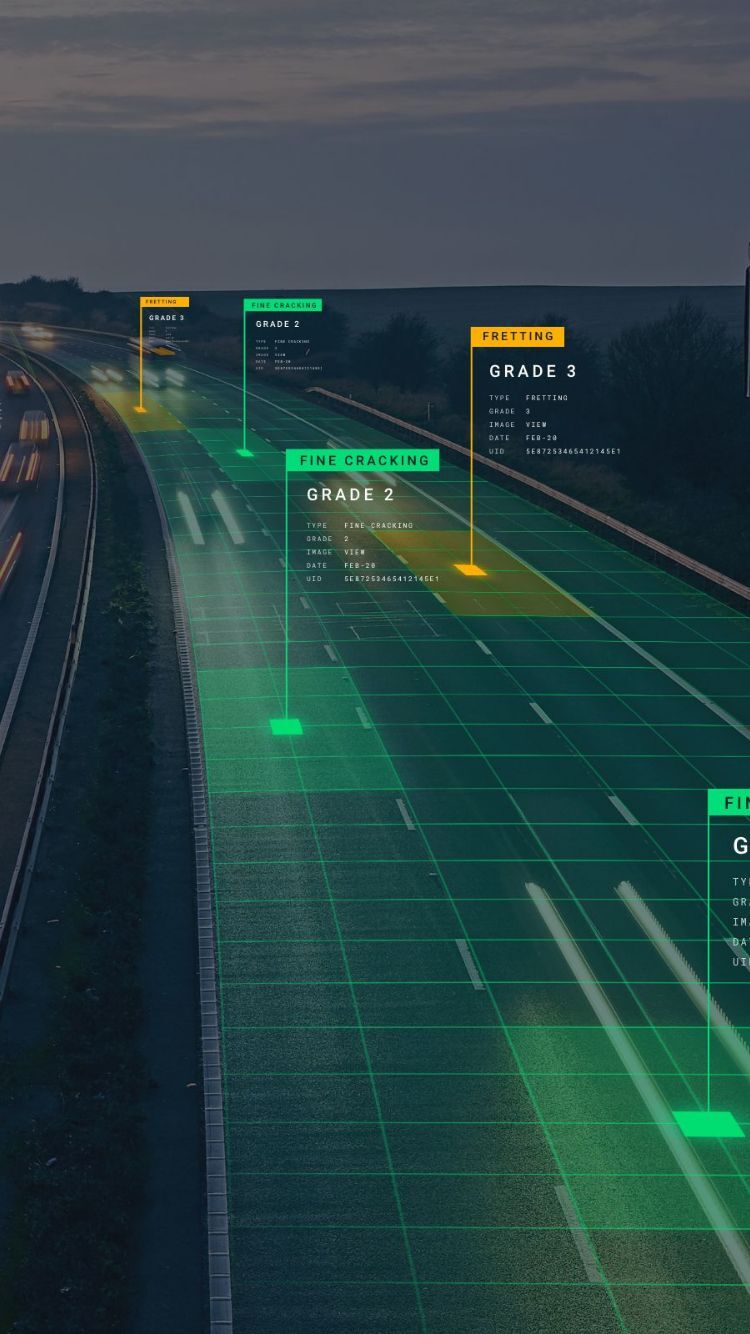

Digitally transforming the real world

via high-definition data capture

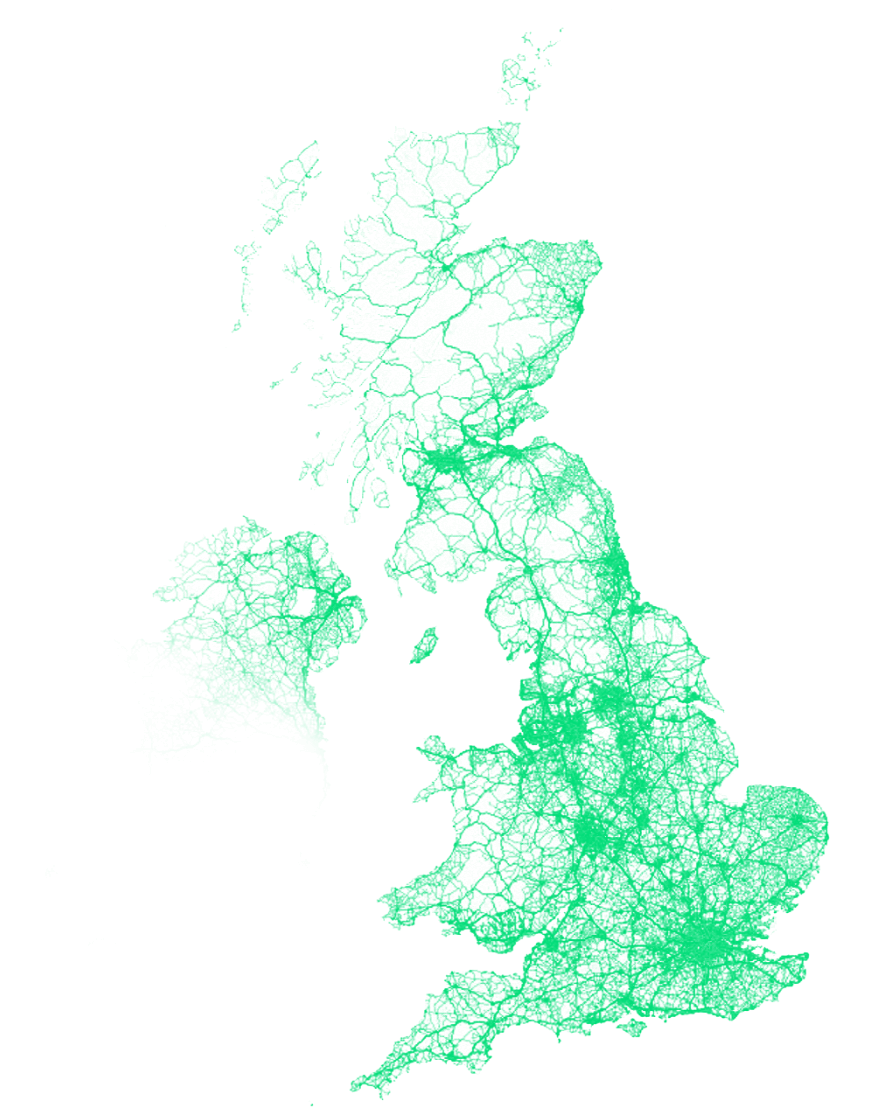

and connected vehicle data

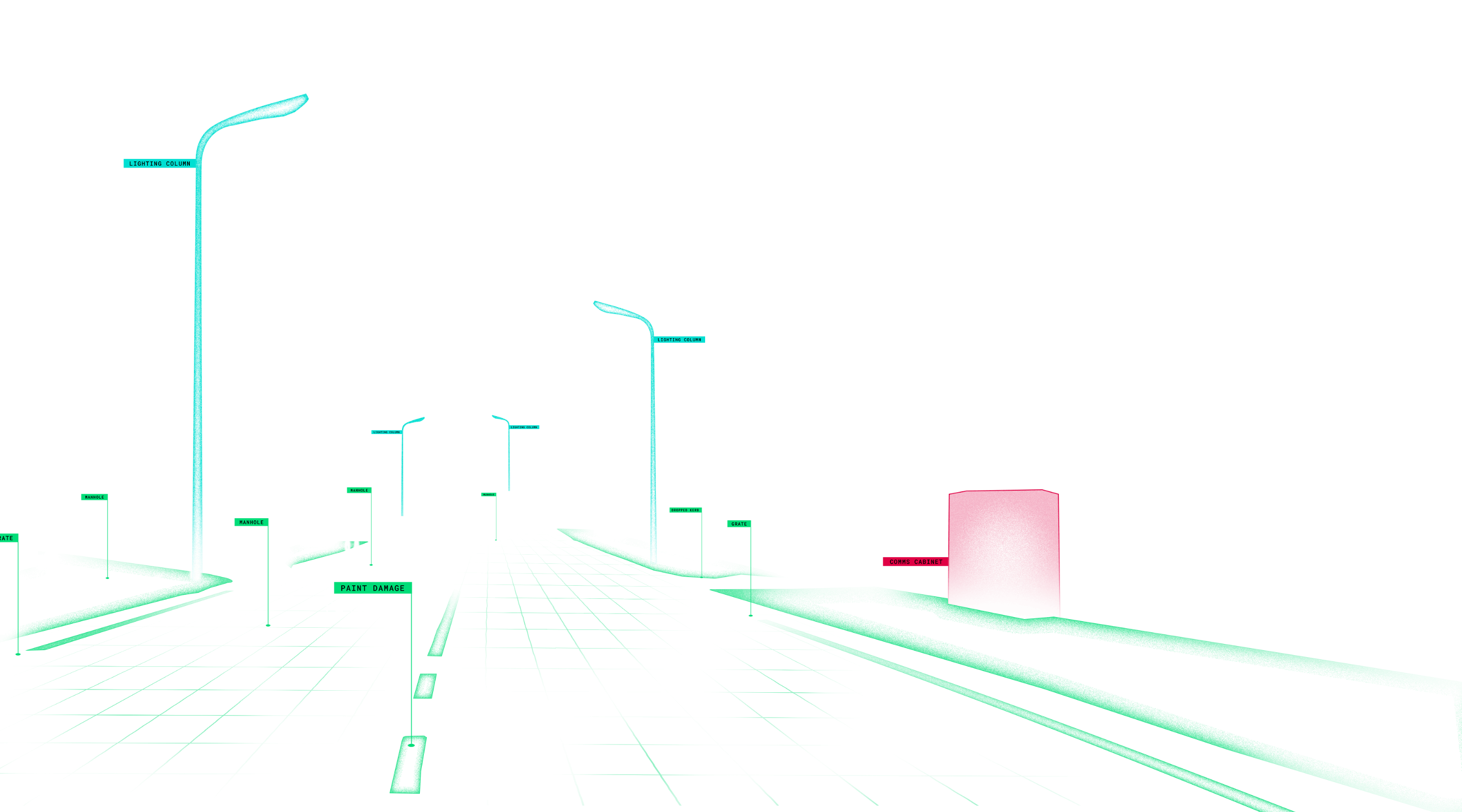

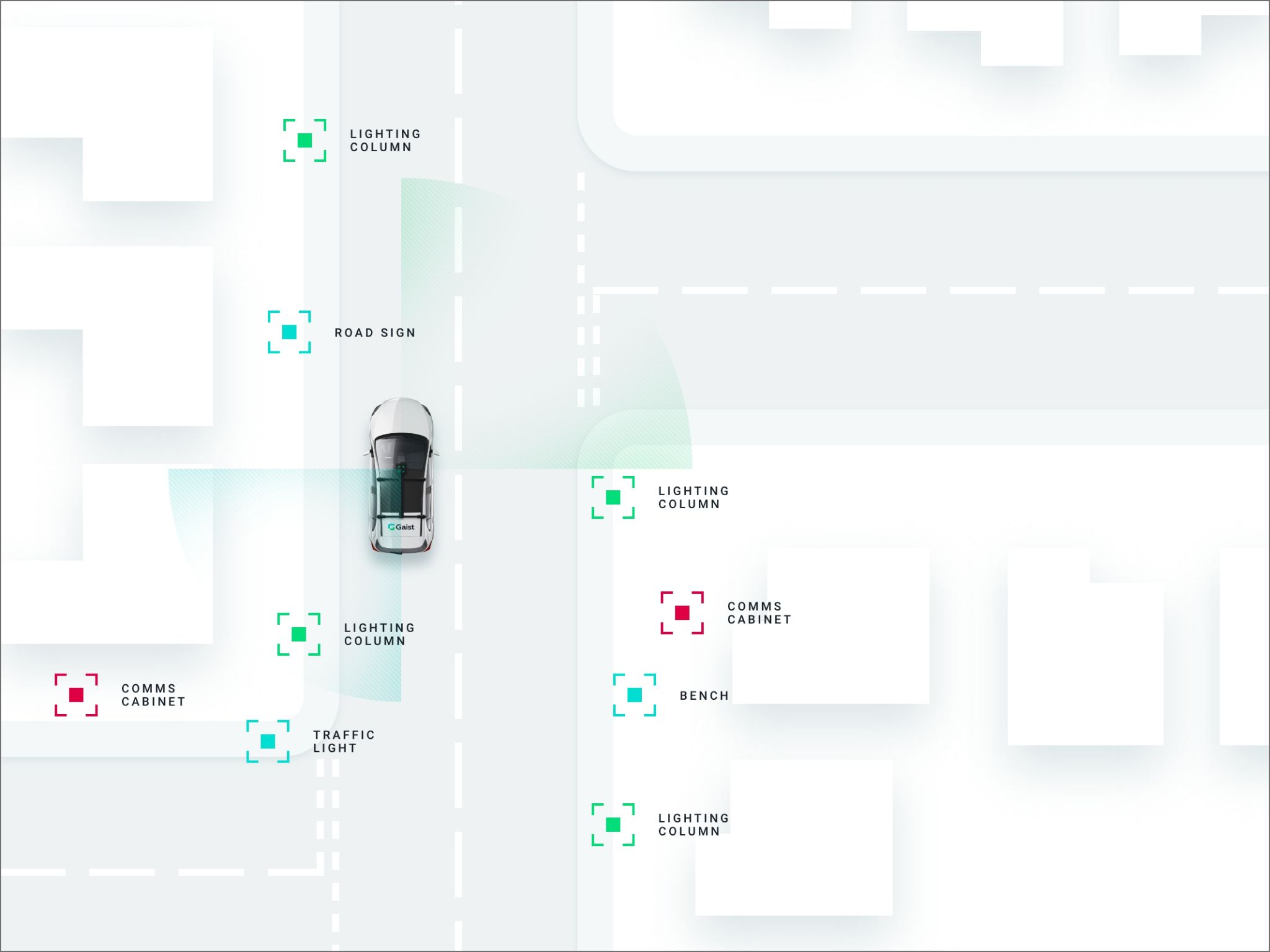

Identifying your roadside assets

and the condition of your highways network

Where every detail matters