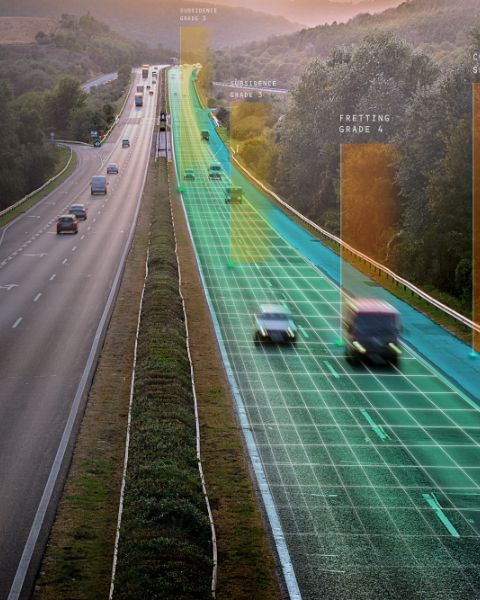

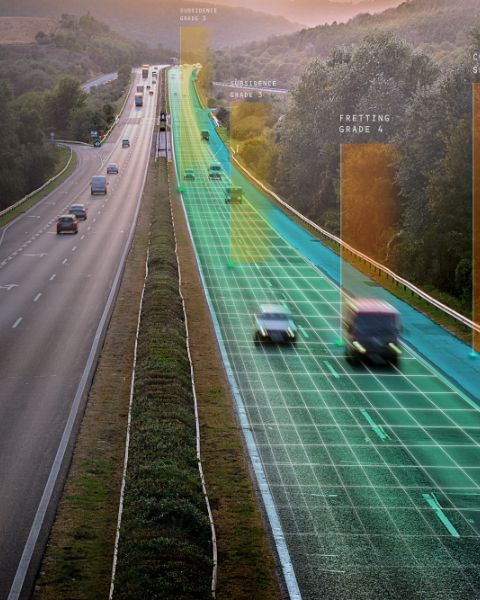

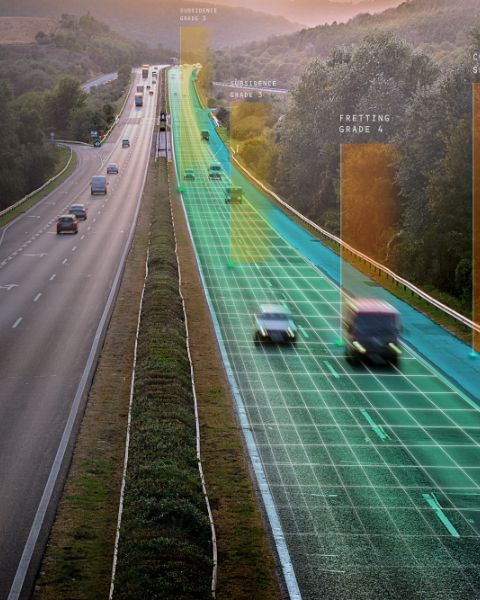

“Many companies collect data but few can turn that data into meaningful action. Our world-class team uses its deep understanding of our clients’ needs to deliver valuable insights that help streamline operations, reduce risk, and cut costs while increasing safety.”

Company Values

At Gaist we believe that relationships matter. We pursue open, honest and constructive relationships with employees, strategic partners and customers.

We demand excellence in everything we do. Through innovation and collaboration we aim to deliver a transformational experience to all those who work with us.

Expertise is key

At Gaist, we have a wide range of cross-sector expertise reflecting a diversity of skillsets and backgrounds. Coupled with a longstanding collaborative approach to delivering our services, this gives us the edge in developing innovative solutions that are focused on solving the problems faced by you, our clients.

We look beyond the norm to provide a service that meets your challenges every step of the way.

Strategic Partnerships

Gaist and TRL work together to solve global roads challenges

Gaist are shaping the future of transport in this tie-up with Smart Mobility Living Lab

Gaist transform UK winter service provision in partnership with Safecote

Gaist’s roadscape intelligence data is made available through the Confirm platform

Road Safety takes a leap forward in this partnership between Gaist and Aisin

Research & Innovation

Gaist have a reputation for developing highly intelligent systems that challenge legacy technology and methodologies. We employ the next generation of AI within our award-winning platform to deliver the ultimate solution.

We promote innovation through an ecosystem of collaborative engagements with technology start-ups, which have access to our rich data sets to develop the transport and mobility apps of tomorrow.

Meet the team

The focus on customer experience, innovation and delivery of leading technology is driven by the Gaist Board, who have extensive experience of delivering successful global technology solutions.